Ride the Wave – Model Health Monitoring

What do data science and ocean tides have in common? We could run a predictive analysis on the movement of currents and the ebb of tides, but let’s instead consider “drift” as it relates to model health: the subtle changes that may, or may not, occur in a model over time.

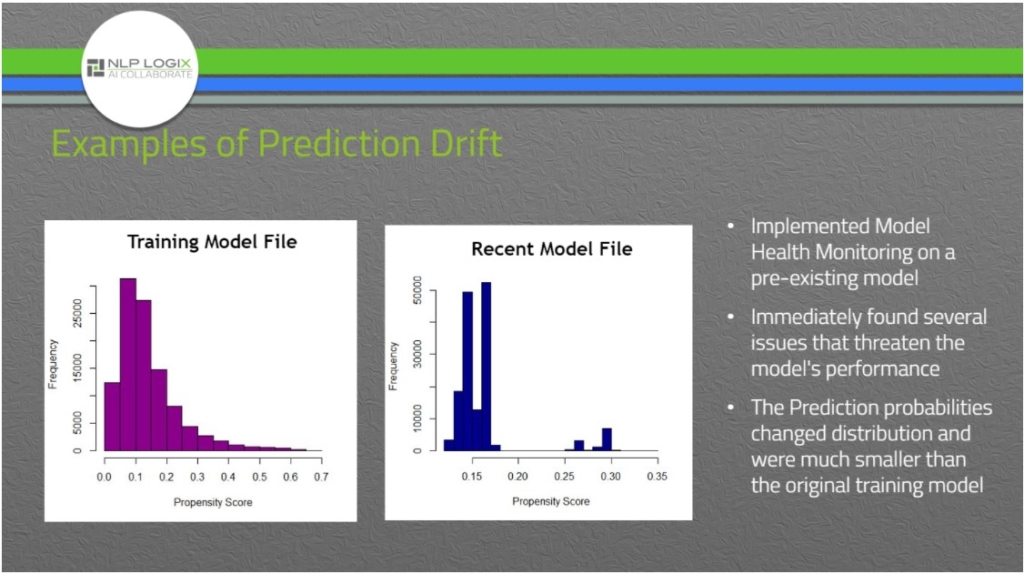

Drift is a change over time in the data going into, or coming out of, a model. These changes are often subtle and easily go unnoticed, which is why recognizing the warning signs of a possible shift is essential to model health monitoring. In a recent presentation titled “Catch My Drift,” Mary Sheridan, Modeling & Analytics Team Lead at NLP Logix, spoke about feature drift and why monitoring it should be an important part of model health.

Sheridan states, “Over time, many things can cause a model’s performance to degrade, and we are often not privy to this information until it is too late. One way to help detect whether a model may be at risk is to monitor changes in its features over time. If we see the data driving the model’s predictions is changing, that can be an indicator that the model’s predictions may not be as accurate or the model may need to be retrained on this new data.”
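One common way to put this kind of feature monitoring into practice (not necessarily the method described in the presentation) is the Population Stability Index (PSI), which compares how a feature’s values are distributed in the training data against the data currently being scored. The sketch below is a minimal, dependency-free Python version; the function name `psi`, the ten quantile-based bins, and the small epsilon used for empty bins are illustrative assumptions.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a training sample and a scoring sample.

    Bin edges are taken from quantiles of the training (expected) sample, so each
    bin holds roughly the same share of the training data.
    """
    eps = 1e-6  # assumed floor for empty bins so the log term stays finite
    ordered = sorted(expected)
    n = len(ordered)
    # Interior cut points at the 1/bins, 2/bins, ... quantiles of the training data
    edges = [ordered[int(n * i / bins)] for i in range(1, bins)]

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            counts[bisect_right(edges, v)] += 1  # bin index = number of edges <= v
        return [max(c / len(values), eps) for c in counts]

    p_train = proportions(expected)
    p_score = proportions(actual)
    # Sum over bins of (actual share - expected share) * ln(actual share / expected share)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_train, p_score))


train = [float(x) for x in range(1000)]
print(psi(train, train))  # identical distributions -> 0.0
shifted = [x + 500 for x in train]  # the same feature after a large upward shift
print(round(psi(train, shifted), 2))
```

A frequently cited rule of thumb reads PSI below 0.1 as stable and above 0.25 as a significant shift, though thresholds are best tuned per model and per feature. Because PSI only compares two samples, the same function could also be pointed at a model’s predicted probabilities to watch for prediction drift.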

Common causes of degrading model health:

- Data used for training does not match the greater population

- The behaviors of the population of interest naturally change over time

- Unexpected events (e.g., the impacts of COVID-19) can change expected behavior

- New segments may emerge in the population over time

- Source data may change due to new systems or errors

Like a lifeguard on watch, model health monitoring is a safeguard that keeps models on course. Left unsupervised, a model can gradually drift as conditions shift around it. These shifts are often unexpected, so when the tide does change, we should proceed with caution and confirm the model is still performing as intended. Brandon Cleary, Client Success Data Analyst at NLP Logix and a former beach lifeguard, compares model health monitoring to keeping a cautious eye on the tides: “Beach flags are commonly yellow, or maybe even red. The ocean is never fully safe, so a green flag is not always flying.”

Scanning for signs of erosion is a priority if a model is to stay on its true course. So how should model health be monitored? Grab some shades and take a look.

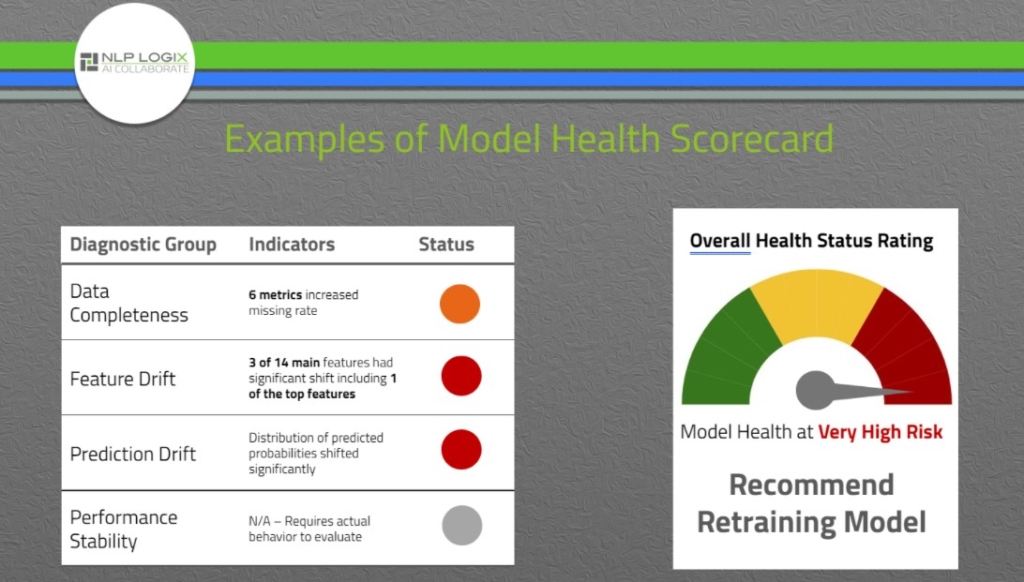

- Data Completeness – Ensure the source data is complete and matches the format of the data used to train the model.

- Feature Drift – Ensure the characteristics of the data being scored by the model are similar to those of the data used to train it.

- Prediction Drift – Ensure the distribution of predicted probabilities is similar to the distribution observed on the training data.

- Performance Stability – Ensure the model continues to perform as intended, measured against actual outcomes when labeled data is available.
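A data completeness check can be as simple as comparing each incoming batch against the columns, types, and null rates seen during training. The Python sketch below assumes records arrive as dictionaries; `EXPECTED_SCHEMA`, `completeness_report`, and the 5% null-rate tolerance are hypothetical choices for illustration, not part of any NLP Logix tooling.

```python
from typing import Any, Dict, List

# Hypothetical schema captured from the training data: column -> expected type
EXPECTED_SCHEMA: Dict[str, type] = {"age": int, "income": float, "region": str}


def completeness_report(
    rows: List[Dict[str, Any]],
    schema: Dict[str, type],
    max_null_rate: float = 0.05,  # assumed tolerance for missing values
) -> List[str]:
    """Return human-readable issues; an empty list means the batch passed."""
    issues: List[str] = []
    n = len(rows)
    for col, expected_type in schema.items():
        # A column absent from a record is treated the same as an explicit None
        values = [row.get(col) for row in rows]
        nulls = sum(1 for v in values if v is None)
        if n and nulls / n > max_null_rate:
            issues.append(f"{col}: null rate {nulls / n:.0%} exceeds {max_null_rate:.0%}")
        wrong_type = sum(
            1 for v in values if v is not None and not isinstance(v, expected_type)
        )
        if wrong_type:
            issues.append(f"{col}: {wrong_type} value(s) not of type {expected_type.__name__}")
    # Columns never seen in training may signal a new or changed source system
    unexpected = {key for row in rows for key in row} - set(schema)
    for col in sorted(unexpected):
        issues.append(f"{col}: column not present in training schema")
    return issues


batch = [
    {"age": 34, "income": 52000.0, "region": "NE"},
    {"age": "41", "region": "SW", "channel": "web"},
]
for issue in completeness_report(batch, EXPECTED_SCHEMA):
    print(issue)
```

For the sample batch, the report flags the string-typed `age`, the 50% null rate in `income`, and the unexpected `channel` column. In practice the schema and tolerances would be captured when the model is trained and versioned alongside it.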

Being vigilant and aware of model health provides more than water wings; it provides the full scuba gear needed to dive into the analysis. Learn more about Model Performance Monitoring.